War with ClearScore: How Affordability Modelling Misled the Consumer

This entry documents a dispute with ClearScore concerning how it handled a challenge to inaccurate information generated and displayed through its platform.

The issue examined here is not simply the presence of incorrect data, but how ClearScore framed, presented, and defended that data once its accuracy was questioned — particularly where internally generated estimates were presented using language that implied external judgment or lender assessment.

This case is about misframed authority, not affordability in the lender sense.

Context

ClearScore positions itself as a consumer-focused credit platform, designed to help individuals understand, monitor, and improve their credit position. It aggregates credit reference agency data and supplements this with internally generated tools and indicators intended to aid consumer understanding.

One such feature presents an assessment of affordability and disposable income, framed within the platform using language such as “How you look to lenders.”

In doing so, ClearScore occupies an intermediary position: it generates its own analytical outputs, presents them alongside genuine credit data, and encourages users to interpret the combined view as meaningful to real-world lending decisions.

That positioning carries responsibility — particularly where internally derived estimates are presented in a way that implies external validation or consequence.

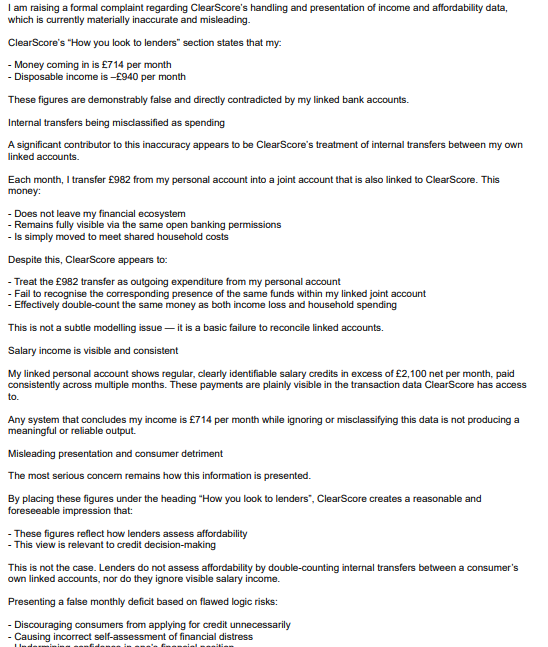

What was challenged

The challenge raised with ClearScore concerned how affordability information was calculated and presented, rather than the existence of an affordability tool itself.

Specifically:

Internal transfers between personal and joint accounts were categorised as outgoing expenditure.

This resulted in double-counting: the transferred funds were counted as expenditure when moved, and again when they were actually spent.

The resulting calculation materially understated disposable income.

The issue was not subjective, discretionary, or a matter of interpretation. The categorisation error was demonstrable when compared against the underlying transaction records.
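The arithmetic behind this kind of error can be illustrated with a minimal sketch. Everything in it is hypothetical: the figures, the `Outgoing` type, and the `disposable_income` function are invented for illustration and are not taken from ClearScore's tool or from the transaction records in this dispute. It simply shows how counting a transfer between a person's own accounts as spending, and then also counting the bills paid from the receiving account, understates what is left over.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outgoing:
    description: str
    amount: int              # whole pounds, hypothetical figures
    internal_transfer: bool  # True if money only moved between the user's own accounts


def disposable_income(monthly_income, outgoings, count_internal_transfers):
    """Income minus spending; optionally treats internal transfers as spending."""
    spend = sum(
        o.amount
        for o in outgoings
        if count_internal_transfers or not o.internal_transfer
    )
    return monthly_income - spend


# Hypothetical month: salary lands in a personal account, part of it is moved
# to a joint account, and the household bills are then paid from the joint account.
MONTHLY_INCOME = 3000
OUTGOINGS = [
    Outgoing("Transfer: personal to joint account", 1200, True),
    Outgoing("Rent (paid from joint account)", 900, False),
    Outgoing("Groceries (paid from joint account)", 300, False),
]

# Flawed view: the transfer AND the bills it funded are both counted as spend.
flawed = disposable_income(MONTHLY_INCOME, OUTGOINGS, count_internal_transfers=True)

# Corrected view: the transfer is excluded because no money left the household.
corrected = disposable_income(MONTHLY_INCOME, OUTGOINGS, count_internal_transfers=False)

print(f"Flawed disposable income:    £{flawed}")
print(f"Corrected disposable income: £{corrected}")
print(f"Understated by:              £{corrected - flawed}")
```

In this invented example the understatement equals the full value of the transfer, which is why the error can be checked directly against the underlying transaction records rather than argued as a matter of methodology.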

More importantly, the output of this flawed calculation was framed using lender-facing language, despite being generated entirely by ClearScore’s internal modelling and not used or referenced by any actual lender.

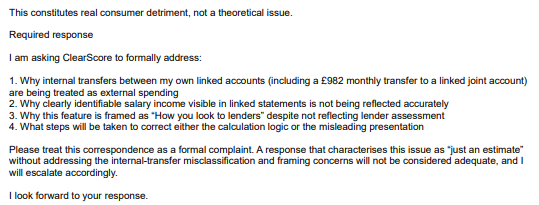

The challenge therefore sought clarity on two points:

Whether the data being displayed met the standard of accuracy required when presented to consumers.

Whether ClearScore accepted responsibility for the framing and impact of its internally generated outputs.

ClearScore handling

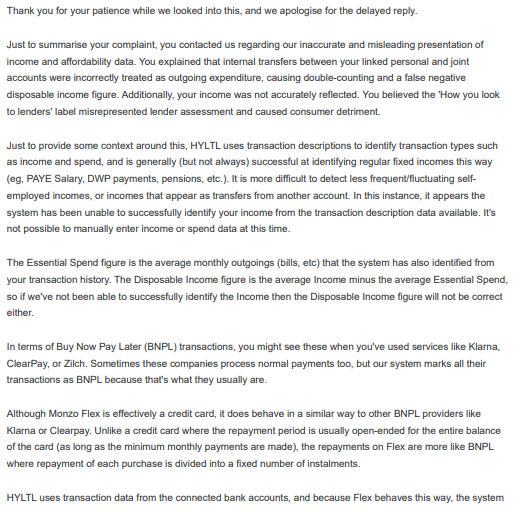

ClearScore’s handling of the challenge relied primarily on process explanation rather than substantive assessment.

While acknowledging that its affordability indicators are derived using internal categorisation logic, ClearScore did not meaningfully address the impact of misclassification on the output presented to the user.

Nor did it adequately engage with the central concern: that internally generated estimates were being framed in a way that implied how lenders would assess the individual, despite no lender input, reliance, or reference.

Responsibility for resolution was effectively deflected by reiterating the tool’s general purpose, without addressing whether the specific output displayed to the consumer was accurate, fair, or appropriately framed once inaccuracies were identified.

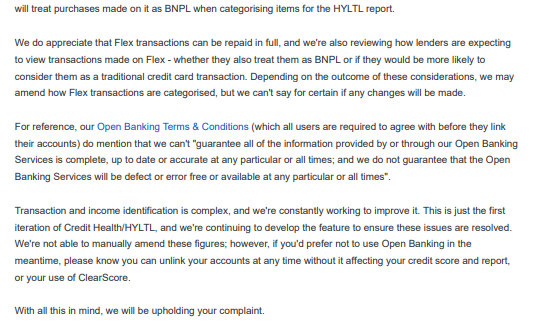

ClearScore response

ClearScore issued a formal response within its complaints framework.

However, the response focused on describing how the tool operates in general terms, rather than addressing the specific inaccuracies raised or the consequences of the presentation used.

The response did not demonstrate that ClearScore had:

Assessed the accuracy of the disputed categorisation.

Considered whether the language used created a misleading impression of lender assessment.

Evaluated the consumer detriment arising from being presented with a falsely negative affordability position.

In effect, the response treated the issue as a disagreement over methodology, rather than recognising it as a presentation and accountability failure.

Why this matters to millions of UK citizens

Platforms like ClearScore are widely used as reference points for understanding personal financial standing. The language and framing used within such platforms materially influence how consumers perceive their own financial health.

In this case, the combination of inaccurate internal categorisation and lender-facing framing resulted in the consumer being presented with an implied deficit that did not reflect reality.

The practical effect was not merely informational error, but behavioural pressure: a sense of underperformance, an implied need for corrective action, and internalised responsibility for a problem that originated entirely within the platform’s own modelling.

When a consumer tool presents internally generated estimates as if they reflect external judgment, the risk is not just confusion — it is misplaced self-blame and unnecessary anxiety, particularly where no corresponding lender data supports the implication.

This case highlights the gap between consumer-facing positioning and accountability when platform-generated insights are challenged.

Current status

At the time of publication, this dispute is partially resolved.

A formal complaint raised with ClearScore was upheld in full. The matter has also been referred to the Financial Conduct Authority for intelligence purposes, and a case reference has been provided.

This entry reflects the position as of February 2026 and will be updated if the position materially changes.

Key themes:

Credit data accuracy · Consumer disputes · UK credit reporting · Regulatory accountability

Complaint page 1

Complaint page 2

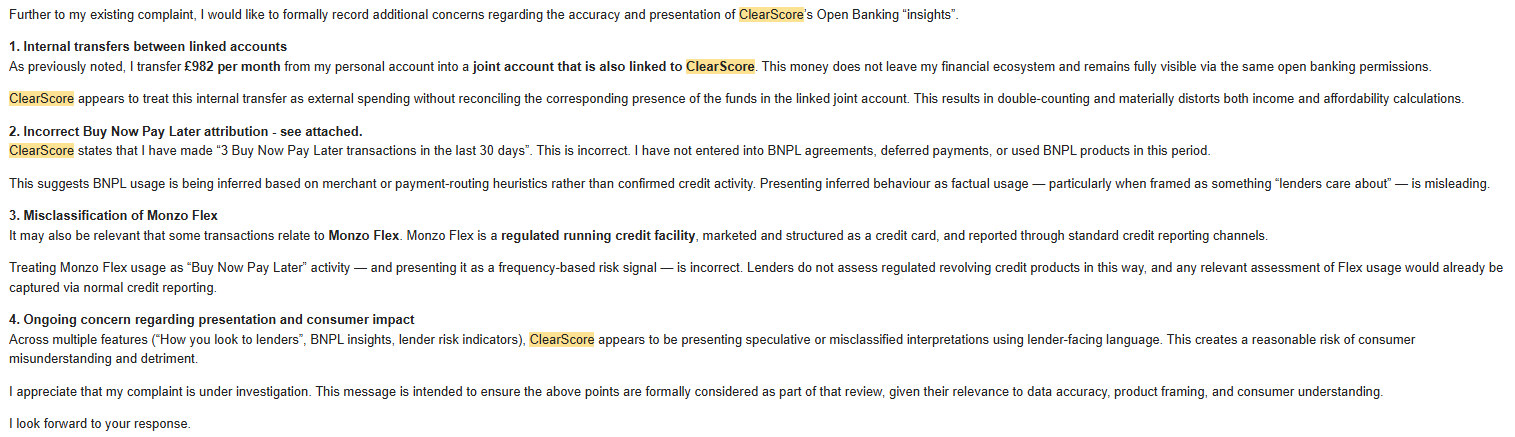

Additional concerns

ClearScore final response page 1 of 2

ClearScore final response page 2 of 2